Product-Market Fit Is Overrated for Pricing

“Product-market fit” is one of the most popular phrases in startup and product circles. It tells you that someone wants what you’re selling. That’s useful.

Let’s be honest. There’s a fine line between pricing smart and pricing sleazy. And most of us working in pricing walk that line regularly, sometimes

Most companies try to segment their markets by surface traits like industry, company size, age group, or geography. And while these can be useful, they

Pricing AI is one of the hottest topics in tech right now and also one of the hardest. The key challenge? Choosing the right pricing

I’ve been struggling to understand the difference between the Jobs to Be Done (JTBD) framework and my own approach of thinking in terms of problems

In pricing conversations, we often treat value and willingness to pay as interchangeable. They are closely related, but they are not the same. Every pricing and

Many pricing professionals talk about understanding value, but few break it down into the architecture that drives buyer and company decisions. In our Context-Driven Pricing

When most companies talk about “market segments,” they really mean industries: aerospace, automotive, healthcare, etc. That’s easy, but it’s wrong. Industries are proxies, not segments.

Pricing is incredibly important. It is what makes a company viable. It drives your near-term and long-term revenue and profit growth. It is a major

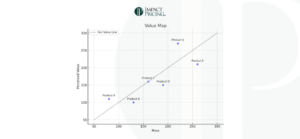

Value Maps seem to be a staple in pricing. Colleagues write about them. Many teach them. In theory, they are great. I don’t use them.